"The ministers of foreign affairs and other officials from the P5+1 countries, the European Union and Iran meeting for a Comprehensive agreement on the Iranian nuclear programme." A March 2015 meeting in Switzerland (Credit: Wikipedia)

Two recent polls have come to quite different conclusions about the public’s reaction to the nuclear deal with Iran, signed by the United States and five other countries. Neither poll does a really good job of measuring what the public was actually thinking at the time the interviews were conducted, however. One poll no doubt overstates support, while the other most likely overstates opposition.

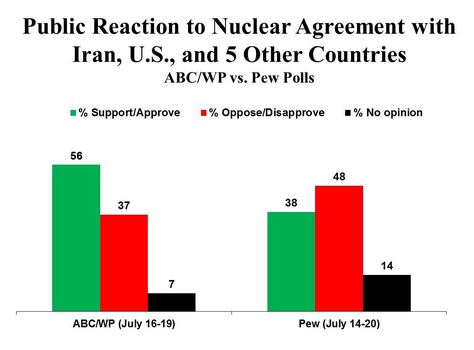

The ABC/Washington Post poll reports strong majority support, while a Pew poll shows a plurality of Americans opposed.

The major reason for the difference in results is that ABC/WP provided positive information about the agreement, while Pew provided no substantive information either favorable or unfavorable.

The wording each pollster used is shown here:

ABC/WP: “As you may know, the U.S. and other countries have announced a deal to lift economic sanctions against Iran in exchange for Iran agreeing not to produce nuclear weapons. International inspectors would monitor Iran’s facilities, and if Iran is caught breaking the agreement, economic sanctions would be imposed again. Do you support or oppose this agreement?”

Pew: “How much, if anything, have you heard about a recent agreement on Iran’s nuclear program between Iran, the United States and other nations?” [Pew then asked:] “From what you know, do you approve or disapprove of this agreement?”

Note that nothing in Pew’s question suggests the agreement is either good or bad, nor does it tell the respondents what the agreement is all about. In general, that approach to asking respondents about their views is clearly preferable to the ABC/WP approach.

The problem with giving information, as did the ABC/WP question, is that once the respondents have heard that view of what the agreement is all about, the sample no longer represents the general public – which has not been given that particular description of the agreement. Thus, we cannot treat the opinion measured by the poll as representative of the general public, but only as representative of the respondents in the poll itself – after being given very specific information.

Furthermore, it is virtually impossible not to bias the question with such information. The ABC/WP description is certainly compatible with what supporters of the deal would say, but opponents might well describe the deal differently – emphasizing what they believe would be the negative consequences of the deal, such as freeing up $100 billion in assets that Iran could use to further fund terrorist organizations, like Hamas and Hezbollah.

There are many other arguments and counter arguments to be made, of course, but the point is that describing the agreement in solely positive terms clearly biases the results that ABC/WP obtained.

Still, the Pew poll has problems of its own. Initially, Pew asked how much respondents had heard of the agreement and found that just over one-third (35 percent) had heard “a lot,” with another 44 percent saying “a little,” and the rest (21 percent) saying nothing at all. Pew went on to ask everyone in the sample whether they approved or disapproved of the agreement, and then filtered the responses to include only those who said they had heard “a lot” or “a little” about it. (See the graph above for those results.)

The unfiltered results (based on everyone in the sample, including those who had not heard about the agreement at all) were somewhat more negative than the filtered results: 33 percent approving, 45 percent disapproving, and 22 percent expressing no opinion. That’s a 12-point negative plurality, compared with 10 points in the filtered figures.
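For readers who want to check the arithmetic, here is a minimal sketch of how that net margin is computed from the unfiltered figures quoted above. The function name `net_margin` and the dictionary layout are illustrative assumptions, not anything taken from Pew's own materials.

```python
def net_margin(approve: int, disapprove: int) -> int:
    """Approve minus disapprove, in percentage points.

    A negative value means disapproval leads (a "negative plurality").
    """
    return approve - disapprove


# Unfiltered Pew figures as quoted in the text (percent of the full sample).
unfiltered = {"approve": 33, "disapprove": 45, "no_opinion": 22}

# Sanity check: the three categories should account for the whole sample.
total = sum(unfiltered.values())

margin = net_margin(unfiltered["approve"], unfiltered["disapprove"])
print(f"total={total}, net margin={margin} points")
```

The same function applied to the filtered approve and disapprove shares would give the 10-point gap mentioned above.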

The main problem with Pew’s approach is that the pollster used a forced-choice question (approve or disapprove, with no explicit option for someone to say they didn’t have an opinion), and then failed to measure intensity. As I’ve shown in many previous posts, some respondents will give an opinion because they are pressured to do so in the context of the survey, but will immediately indicate in a follow-up question that they don’t really care if the opposite happens to what they’ve just said.

ABC/WP also used a forced-choice question, but did follow up with an intensity question, asking if respondents felt “strongly or somewhat” about the issue. The results show that 31 percent feel strongly in favor, while 30 percent feel strongly opposed. That leaves a plurality, 39 percent, who don’t feel strongly about the agreement, one way or the other. (While these results, I believe, provide a more realistic picture of public opinion, neither the Post nor ABC gave them any attention. One has to search the topline document to find them.)
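The 39 percent figure is simply what remains once the two “strongly” groups are removed from the full sample. A short sketch of that calculation follows; the variable and function names are hypothetical, and the residual lumps together everyone who answered “somewhat” or gave no opinion, since the text reports only the combined figure.

```python
def intensity_breakdown(strongly_support: int, strongly_oppose: int) -> dict:
    """Split a sample (in percent) into three groups by intensity.

    The 'not_strong' group is the residual: respondents who said
    'somewhat' in either direction, plus those with no opinion.
    """
    not_strong = 100 - (strongly_support + strongly_oppose)
    return {
        "strongly_support": strongly_support,
        "strongly_oppose": strongly_oppose,
        "not_strong": not_strong,
    }


# ABC/WP intensity figures as quoted in the text.
groups = intensity_breakdown(strongly_support=31, strongly_oppose=30)

# The plurality is the largest of the three groups.
plurality = max(groups, key=groups.get)
print(groups, "plurality:", plurality)
```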

It would be useful to know how intensely Pew’s respondents felt about their views. No doubt many of them, especially those among the 44 percent who said they had heard only “a little” about the agreement, would admit that they don’t have strong views, giving us a more realistic picture of how engaged the public actually is. By pressuring respondents with little information to come up with an opinion, and then not measuring how strongly they feel about the issue, Pew produces results that no doubt overstate both support and opposition.

Both media organizations seem loath to acknowledge that a large proportion of the public is unengaged on this (or any other) issue. That’s why they use forced-choice questions, sometimes provide information to respondents, and either don’t measure or don’t report how strongly people feel about their views.

But to understand the public, it’s important to know not just (objectively) how many people are in favor and how many are opposed to a policy, but also how many people are not even engaged.