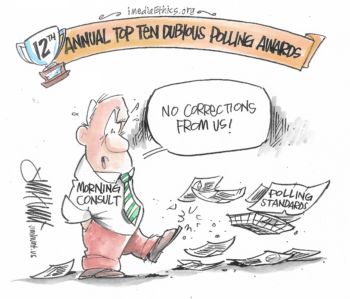

(Credit: Jim Hunt for iMediaEthics)

Editor’s note: Every January, iMediaEthics’ polling director David W. Moore assembles the top ten “Dubious Polling” Awards for iMediaEthics. The tongue-in-cheek awards “honor” the previous year’s most questionable actions in media polling news. The 2019 awards are the 12th in this series.

10. Sleeping at the Switch Award

Winner: Fox News Poll, for its tendentious question that all but tells the respondent what to answer. “Do you think Americans rely too much on the government and not enough on themselves?”

Doesn’t that sound like the pollster just wants you to say “yes”? In fact, respondents did, by a 61% to 30% margin.

Think what the margin might have been if the question had been balanced: “Do you think Americans rely too much on the government and not enough on themselves, OR do you think Americans rely mostly on themselves and rely on the government for help only when needed?”

Ensuring questions are balanced is something pollsters learn in Polling 101.* How did the Fox pollsters, who have been given an A- rating by Nate Silver’s 538, let this question through?

Someone was asleep at the switch…

*For a discussion of unbalanced questions, “response acquiescence,” and other question wording problems, see Chapter 8, “The Elusive Pulse of Democracy,” in David W. Moore, The Superpollsters: How They Measure and Manipulate Public Opinion in America (New York: Four Walls Eight Windows, 2nd Edition, 1995), pp. 325-358.

9. Getting Out Over Their Skis Award

Winner: CBS Battleground Tracker of Convention Delegates for projecting a delegate count of Democratic candidates through Super Tuesday six months before Super Tuesday actually happens.

OK…we know the media like to predict the future, but this was ridiculous! The poll of the four early carve-out states (Iowa, New Hampshire, Nevada and South Carolina – each voting approximately a week apart), combined with results from some 14 states on Super Tuesday, is based on a faulty underlying assumption – that all voters cast their ballots at the same time. They don’t. It’s a sequential process. What happens in Iowa can influence what happens in New Hampshire, and so on. And, by Super Tuesday, many of the candidates running in September won’t even be in the race. Polling all the states at one time to see who’s ahead is…getting out over the skis.

By the way, CBS projected Beto O’Rourke to have 34 delegates, Kamala Harris, 16. Three months later, both O’Rourke and Harris were no longer candidates.

Oops!

8. Forgetting to Read the Fine Print Award

Winner: New York Post for claiming that a Fox News poll was wrong when it showed most Americans favoring impeachment of President Trump. The alleged error was that the poll had too few Republicans and independents.

As the poll’s methodology section revealed, the poll had plenty of Republicans and independents. The New York Post reporter just didn’t know how to read polls, but blithely went ahead with an “analysis” of the results anyway.

Next time, read…

7. You Can’t Really Be Serious! Award

Winner: The Economist/YouGov November poll that asked its respondents to answer 256 questions – for free!

Think about that for a moment. Would you do that?

The November poll was supposed to be a sample of respondents who represented the American adult population. What kind of people are willing to do that?! A very dedicated and, one might surmise, unusual group of Homo sapiens.

Do we think such poll junkies are truly typical of the American population?

Yes, I know – the pollsters weight the data to make the sample look like the larger population. But really – 256 questions?

They can’t be serious!

6. Say It Ain’t So Award

Winner: Chuck Todd of NBC’s Meet the Press, for sparking a discussion about a presidential candidate’s internal poll.

What was Chuck thinking?! Certainly, in beginning journalism he would have learned that selective release of information from a candidate-sponsored poll cannot be trusted. Such polls are intended to manipulate the news. And this one certainly did.

Starbucks billionaire Howard Schultz was contemplating an independent run for president, and his internal poll allegedly showed him getting 17% of the vote, in a race between President Trump and Sen. Kamala Harris (who were “essentially tied”).

Chuck was very “impressed,” suggesting the poll showed there was “room” for an independent candidate in the presidential race.

Chuck, how could you?

5. The Hat Trick of Bad Question Wording Award

Winner: USA Today/Suffolk University poll, for its Trump-inspired question on the merits of the Mueller investigation.

The poll found “Half of Americans say Trump is victim of a ‘witch hunt’ as trust in Mueller erodes.”

The question: “President Trump has called the Special Counsel’s investigation a ‘witch hunt’ and said he’s been subjected to more investigations than previous presidents because of politics. Do you agree?” The results: 50% agree, 47% disagree, 3% unsure.

- Unbalanced – no option of “disagree” mentioned (Do you agree or disagree?)

- Leading – tells people Trump called the investigation a “witch hunt,” instead of just asking if respondents consider it one

- Double barreled – asks if people agree about witch hunts and, in the same question, if Trump has been subjected to more investigation than previous presidents (one can agree with one item and disagree with the other)

A perfect hat trick of errors!

4. We All Stink at Polling Award

Winner: All the polling companies in Australia predicting a Labor win in the May 2019 Federal Election.

As The Guardian noted after the election:

“Ipsos, Newspoll, and Guardian Australia’s Essential Poll all went into Saturday’s election predicting a two-party-preferred result of 51-49 to Labor. None of the 16 opinion polls published between when the election was called on 10 April and polling day on 18 May predicted a Coalition win; most gave an even higher margin to Labor.”

In a post-election analysis, a Guardian columnist, Nobel Laureate Professor Brian Schmidt, wrote:

“Based on the number of people interviewed, the odds of those 16 polls coming in with the same, small spread of answers is greater than 100,000 to 1. In other words, the polls have been manipulated, probably unintentionally, to give the same answers as each other. The mathematics does not lie…

“The last five years have demonstrated to me the fragility of democracy when the electorate is given bad information. Polls will continue to be central to the narrative of any election. But if they begin to emerge as yet another form of unreliable information, they too will be opened up to outright manipulation, and by extrapolation, manipulation of the electorate. This is a downward spiral our democracy can ill afford.”

Amen.
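
Professor Schmidt’s point about sampling variability can be illustrated with a rough sketch. It is not his calculation: the sample size (1,500 per poll), the assumed true Labor share (51.5%), and the window counted as “the same answer” (51 to 52 after rounding) are all assumptions chosen for illustration, and the normal approximation treats every poll as an independent simple random sample.

```python
import math

def prob_within(p_true, n, lo, hi):
    """Normal-approximation chance that one poll of size n reports a share in [lo, hi]."""
    se = math.sqrt(p_true * (1 - p_true) / n)  # standard error of a sample proportion

    def cdf(x):
        return 0.5 * (1 + math.erf((x - p_true) / (se * math.sqrt(2))))

    return cdf(hi) - cdf(lo)

def prob_all_within(p_true, n, lo, hi, polls):
    """Chance that `polls` independent polls all land in the same window."""
    return prob_within(p_true, n, lo, hi) ** polls

# Hypothetical inputs, not taken from the actual 2019 polls.
single = prob_within(0.515, 1500, 0.505, 0.525)
all_16 = prob_all_within(0.515, 1500, 0.505, 0.525, 16)
print(f"one poll in window: {single:.3f}")
print(f"all 16 in window:   {all_16:.6f}")
```

Under these assumptions a single poll lands in the window only a bit more than half the time, so sixteen in a row is very unlikely. Different assumptions change the exact odds, but not the direction of the argument.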

3. Woulda Coulda Shoulda Award

Winner: “Embarrassed pollster,” Australia’s Chris Lonergan of Lonergan Research, who “ripped up poll that showed Labor losing the election.”

As though to prove Professor Schmidt’s claim that the polls were manipulated (see Award #4), The Sydney Morning Herald reported on Lonergan’s admission:

“Given that we felt it [the poll] was out of step with the general sentiment that we had seen reported in the media, we were concerned that the report might not be accurate and made a decision not to publicly release the data…

“No one wants to release a poll that is wildly out of step…We didn’t want to be seen as having an inaccurate poll.”

He would have been the only pollster correctly predicting the outcome of the election.

Hmm…if only he woulda taken his own poll seriously, he coulda been the only pollster to get it right, something he definitely shoulda done.

2. Putting Words in the Voters’ Mouth Award

Winner: Siena College Research Institute, for its horrendously biased poll about changes in New York state government.

Conducted in mid-March, the poll ostensibly wanted to measure voters’ views on whether the government had moved “too far to the political left.” But the very question the poll asked almost begged respondents to agree with that point of view.

Here are the ways the poll put words in the voters’ collective mouth:

- The poll informed the voters that “Since January, New York State government, the Governor and both houses of the State Legislature, has been controlled by the Democrats.” There was no need to inform voters of these facts, if the intent is to find out what the voters think – not what the pollster wants them to think.

- The poll used an agree/disagree question, which pollsters know is a biased way to ask questions. It leads to “response acquiescence,” the tendency of people who don’t have an opinion to agree rather than disagree.

- The crucial agree/disagree question reads: “New York State is now moving too far to the political left.” First, the statement is vague – no mention of any specific policies, just an ambiguous statement that can be interpreted in any way the pollster eventually decides. And, second, the question tells the respondent the state is moving to the political left – the only issue is whether it’s “too far” or not. Perhaps the respondent didn’t even “know” the state had moved to the political left. The point of poll questions is to find out what the respondent knows, not what the pollster wants respondents to say.

That Siena College poll could have been designed in a non-partisan, objective way, had the pollster been inclined. Here’s how:

- Do not inform respondents about which party controls state government. The point is to find out what actual voters perceive, and giving them information simply biases the sample so that it no longer represents the larger population of voters.

- If the vague question wording about moving left is to be retained, ask this question first: “As far as you know, in the past three months have state government policies moved to the political left, to the political right, or have they remained the same as they were previously – or are you unsure?” (It would probably be better to talk about policies becoming more liberal, or more conservative, rather than moving to the left or to the right.)

- For those who say the policies have moved “left” or “right,” ask: “Have they moved too far [left/right], or about the right amount – or are you unsure?”

Polls can be used to measure what voters are actually thinking. And, as Siena College has shown, they can also be used to create the illusion of public opinion by putting words into respondents’ mouths.

1. Admitting Mistakes is for Losers Award

Winner: Morning Consult poll, for its misleading report on the government shutdown and border wall poll – and its refusal to either acknowledge the problem online or correct it, much less apologize for the error.

Yes…it’s true that in politics these days, many political leaders feel it’s better never to admit error. Facts don’t matter. Just double down on being right, regardless.

But so far that has not been an acceptable modus operandi in polling. Yet…

On January 23, Politico (Morning Consult’s polling partner) reported that only 7% of voters supported funding for a border wall if it was the only way to end the government shutdown, while 72% were opposed. This was hardly credible – certainly more than 7% of voters supported President Trump’s demand for border wall funding!

The poll actually provided evidence that 46% of voters were willing to fund a border wall to end the shutdown. Politico’s mistake was caused by the topline and tables provided by Morning Consult, which failed to indicate that the 7% figure applied only to voters initially opposed to funding for a border wall.
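
The base problem can be shown with made-up numbers. The sketch below assumes the follow-up question was asked only of voters who did not initially support wall funding, and that the 7% is a share of that subgroup; the 40% initial-support figure is purely hypothetical and is not from the Morning Consult poll.

```python
def overall_support(initial_support, followup_rate):
    """Share of all voters willing to fund the wall: initial supporters
    plus the fraction of everyone else who said yes on the follow-up."""
    return initial_support + (1 - initial_support) * followup_rate

# Hypothetical: 40% support up front; 7% of the remainder agree on the follow-up.
share = overall_support(0.40, 0.07)
print(f"{share:.1%}")
```

Reporting the 7% without its base makes it look like a share of all voters, when the overall figure is several times larger.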

Once advised of the error, Politico immediately acknowledged it online and corrected both its topline and crosstabs.

To this date, however, Morning Consult has not corrected its topline/crosstabs online.

When I asked the company’s Director of Communications, T. Anthony Patterson, last January why a correction had not been made, he insisted all data online were correct, and if the reader misinterpreted the results (he was referring to me, although Politico had also misinterpreted the data), that was the reader’s fault.

Yup…never admit error, never correct faulty data, never apologize. Only losers admit they make mistakes.

So, there!

_________________